On the morning of May 12, Sam Altman opened X at 5:34 AM and announced Daybreak — OpenAI's effort to put frontier models to work scanning, triaging, and patching software vulnerabilities. The post was short and confident. AI is already good at cybersecurity, the message ran, and about to get a lot better, and OpenAI would like to start working with as many companies as possible. By breakfast time in San Francisco the press cycle was in motion, and by the time most Australian developers had finished their second coffee, Daybreak had its own landing page at openai.com/daybreak, three flavours of GPT-5.5, and a roster of partners that read like a who's-who of enterprise security: Cloudflare, Cisco, CrowdStrike, Palo Alto Networks, Oracle, Zscaler, Fortinet, Akamai.

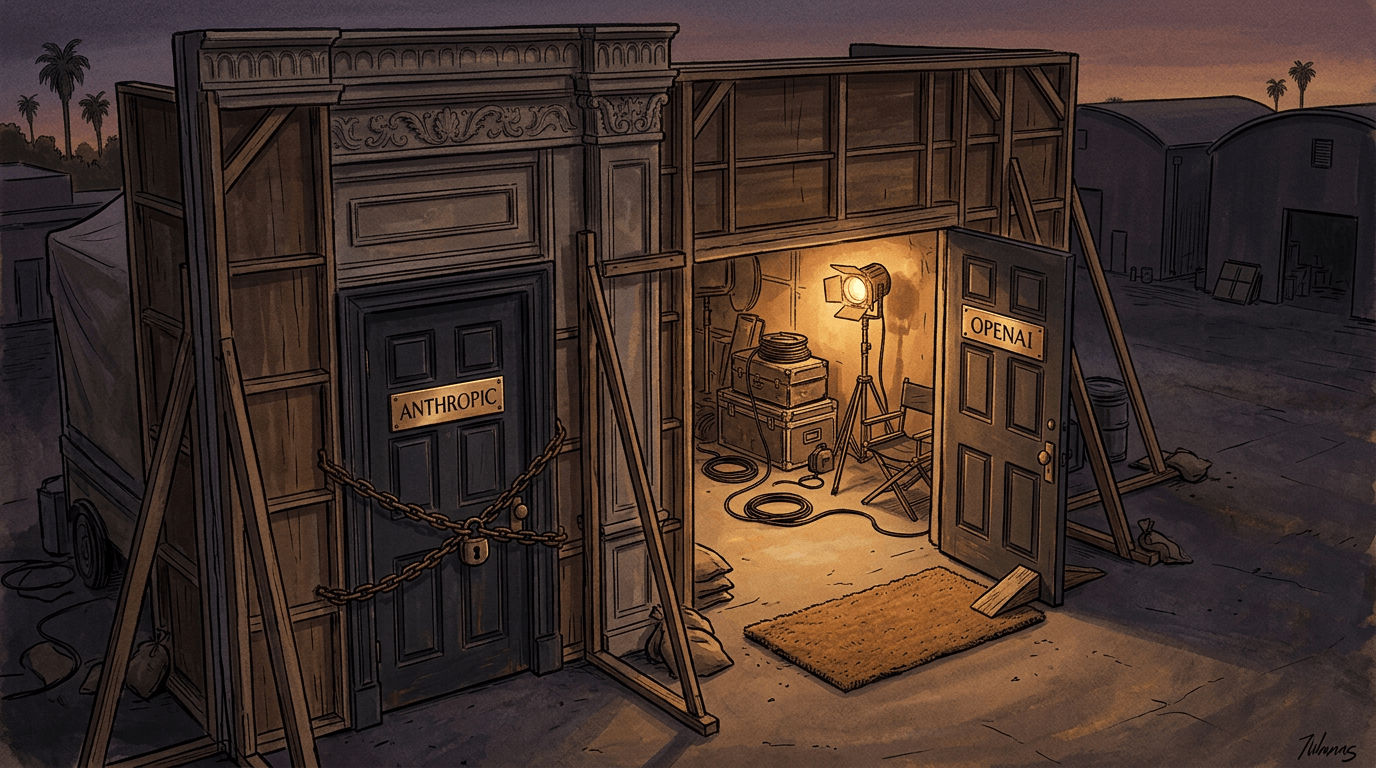

The interesting thing about this announcement is not Daybreak itself. The interesting thing is the door it walked through, and the door it left ajar behind it.

A few weeks earlier, Anthropic had introduced its own contender in the same space: Claude Mythos, wrapped in something called Project Glasswing. The framing was almost theatrical. Mythos, Anthropic's accompanying technical post explained, had already uncovered thousands of high-severity vulnerabilities across every major operating system and web browser. It was, in their own words, the most cyber-capable model they had ever built. And precisely because it was so capable, it would not be made publicly available. A handful of vetted partners — AWS, Apple, Cisco, Google, JPMorgan Chase, Microsoft — would get access. Everyone else would have to wait.

It was a careful piece of choreography. Frightening enough to suggest gravity, restricted enough to suggest responsibility. Bruce Schneier, writing on his blog shortly after the announcement, put it more bluntly than most: "This is very much a PR play by Anthropic — and it worked. Lots of reporters are breathlessly repeating Anthropic's talking points, without engaging with them critically." He suspected, too, that OpenAI's eventual response would be less about technical disagreement and more about not wanting to cede the spotlight. That prediction has aged well.

Daybreak is the answer to that performance, and the answer is essentially: come on in. Two buttons at the top of the page. Request a vulnerability scan. Contact sales. No invitation list, no sealed envelope, no "vetted partners only". The blog post does not use the word "project", perhaps deliberately, perhaps not. Gizmodo, in its coverage, noted the difference in atmosphere plainly: where Glasswing arrived with frightened governments scurrying behind the scenes, Daybreak arrived with a sales form.

Two doors. The same room behind them.

What sits in that room is worth examining slowly. Daybreak is built on three models — GPT-5.5, GPT-5.5 with Trusted Access for Cyber, and GPT-5.5-Cyber — and the orchestration layer is Codex Security, which OpenAI quietly released as a research preview back in March. The workflow is straightforward in description. Codex Security ingests a repository, builds an editable threat model of the system, looks for realistic attack paths, validates the most promising ones in an isolated environment, and proposes patches. Hours of analysis compressed into minutes. Audit-ready evidence returned to the customer's existing systems.
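The stages in that workflow can be pictured as a simple pipeline. What follows is a minimal sketch in Python, not OpenAI's implementation or API: every name in it (`Finding`, `build_threat_model`, `validate_in_sandbox`, `run_pipeline`) is hypothetical, and the "analysis" is a toy string match that exists only to show the shape of the flow.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    path: str                    # file where the suspected issue lives
    description: str             # summary of the suspected attack path
    validated: bool = False      # set once the issue is reproduced in isolation
    patch: Optional[str] = None  # proposed fix, filled in after validation


def build_threat_model(repo: dict[str, str]) -> dict[str, list[str]]:
    """Toy threat model: record which sensitive assets each file touches."""
    model: dict[str, list[str]] = {}
    for path, source in repo.items():
        model[path] = ["database"] if "execute(" in source else []
    return model


def find_attack_paths(repo: dict[str, str], model: dict[str, list[str]]) -> list[Finding]:
    """Toy analysis: flag database queries built by string concatenation."""
    findings = []
    for path, source in repo.items():
        if "database" in model[path] and " + " in source:
            findings.append(
                Finding(path, "possible SQL injection via string concatenation")
            )
    return findings


def validate_in_sandbox(finding: Finding, repo: dict[str, str]) -> Finding:
    """Stand-in for an isolated exploit run: checks that untrusted input reaches the query."""
    finding.validated = "user_input" in repo[finding.path]
    return finding


def propose_patch(finding: Finding) -> Finding:
    """Attach a suggested fix, but only to findings that were validated."""
    if finding.validated:
        finding.patch = "use a parameterised query instead of concatenation"
    return finding


def run_pipeline(repo: dict[str, str]) -> list[Finding]:
    """Ingest -> threat model -> attack paths -> validation -> patches."""
    model = build_threat_model(repo)
    candidates = find_attack_paths(repo, model)
    checked = [validate_in_sandbox(f, repo) for f in candidates]
    return [propose_patch(f) for f in checked if f.validated]
```

The one design choice worth noticing is that validation is its own stage: in this sketch, a finding that cannot be confirmed never reaches the patch step, which is the property the pitch leans on most heavily.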

The pitch is fluent, and the underlying capability is real enough. AI models can now reason across large codebases in ways that genuinely help defenders. Mozilla and Anthropic reportedly used Mythos to find and patch 271 vulnerabilities in Firefox in April, which is the kind of concrete result that makes the broader claim land. The technology is not science fiction. The question is how much of the broader pitch survives contact with the actual work of running production software.

Several voices outside the partner press releases have started to sketch the edges of that question. Speaking to AI Business, one cybersecurity practitioner pointed out the obvious limitation of any code-centric approach: a great deal of what matters in security does not live in the code. It lives in how systems run in production, in identity exposure, in lateral movement, in the slow accumulation of misconfigurations that no static analysis can really see. LLMs, he noted, still produce a lot of false positives. Codex Security may build a beautiful threat model and still miss what an attacker would notice in the first ten minutes of reconnaissance.

This is not an indictment of the tool. It is a reminder that the tool is doing one slice of the job. The bottleneck in modern security is rarely "we cannot find vulnerabilities." It is "we cannot triage them fast enough, validate them confidently, and ship fixes without breaking things." Earlier this year, HackerOne paused its bug bounty programme, not because researchers had stopped looking but because AI-assisted submissions were arriving in such volume, with such plausibly worded but hallucinated content, that maintainers could no longer keep up. The researcher Himanshu Anand summarised the new reality bluntly: when AI can turn a patch diff into a working exploit in thirty minutes, the ninety-day disclosure window is protecting nobody.

Daybreak sits inside that landscape, not outside it. It is one more system that finds things faster than humans can act on them. The validation step OpenAI emphasises — that Daybreak does not just identify, it confirms — is the most consequential part of the pitch, and also the part that is hardest to evaluate from the outside. Until there is a public benchmark of the kind Mozilla provided for Mythos, much of what we have is the marketing. Schneier's broader point on Glasswing is worth carrying across to Daybreak as well: both are, at heart, reactive approaches — racing to patch holes before attackers adapt — when the deeper challenge is moving toward systemic resilience rather than hoping to stay one patch ahead of the adversary.

There is a more quietly interesting story in the partner list. Cisco, CrowdStrike, and Palo Alto Networks have signed on to both Daybreak and Glasswing. They are hedging, which is the rational thing for a security vendor to do when two frontier labs are racing to define the category. The lesson for everyone watching is that the category is not yet defined, and the winners are not yet picked. Microsoft's Security Copilot and CrowdStrike's Charlotte AI have been doing recognisable versions of this work for some time. OpenAI and Anthropic are now arriving in a market that already exists, with better models and louder press cycles.

From where we sit — a small consultancy, building and maintaining real systems for real clients — the pragmatic posture is patience. The capability is genuine. The bottleneck the tools solve is real. The bottlenecks they do not solve are also real, and at the moment those include the most expensive parts of running a secure system: deciding what to patch, in what order, with what regression risk, on whose authority. None of that is in the announcement.
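To make that gap concrete, here is a minimal sketch of what even a toy patch queue has to weigh. It is an illustration only: the field names, the 1.5 multiplier, and the scoring formula are all assumptions of ours, not anything Daybreak or Glasswing provides. Notice that two of the inputs, regression risk and sign-off, come from people rather than from a scanner.

```python
from dataclasses import dataclass


@dataclass
class PatchCandidate:
    name: str
    severity: float          # 0-10, a CVSS-style score (assumed scale)
    exploit_confirmed: bool  # did validation reproduce the issue?
    regression_risk: float   # 0-1, the team's estimate that the fix breaks something
    owner_approved: bool     # has someone with authority signed off?


def priority(candidate: PatchCandidate) -> float:
    """Arbitrary illustrative score: boost confirmed exploits, discount risky fixes."""
    boost = 1.5 if candidate.exploit_confirmed else 1.0
    return candidate.severity * boost * (1.0 - candidate.regression_risk)


def patch_queue(candidates: list) -> list:
    """Only approved patches are queued, highest priority first."""
    approved = [c for c in candidates if c.owner_approved]
    return sorted(approved, key=priority, reverse=True)
```

Under these made-up weights, a moderate issue with a safe fix can outrank a severe one whose fix is likely to break production, and an unapproved patch never ships regardless of its score.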

It is also worth noting what Daybreak is not. It is not a product you can switch on this afternoon. It is an enterprise programme, accessed by request, with sales conversations attached. For teams that want AI in their security workflow now, Codex Security on its own, Claude Code with security-conscious prompts, or any of the established tools are all closer at hand. Daybreak's promise is more ambitious (a continuously secured pipeline, with threat modelling built into the development loop), and that promise will be tested over the next six to twelve months by case studies that have not yet been written.

The most honest summary of the moment is this. Anthropic chose the gothic mode: a powerful thing, locked away, drip-fed to the worthy. OpenAI chose the storefront mode: same powerful thing, doors open, sales team waiting. Both are positioning, and both are real. The room behind the two doors is the same room. What gets built in it, and what gets broken by it, will matter far more than which door is currently fashionable.

For now, we are watching, reading the postmortems, and keeping a healthy distance between the press release and the production system.