On April 21, 2026, Odyssey AI quietly made what may be one of the most consequential AI announcements of the year, and it was not a chatbot. It was not a bigger context window. It was not even a video generator in the conventional sense.

It was a world model.

Odyssey-2 Max is a system that predicts the next state of physical reality, frame by frame, in real time, for as long as you keep interacting with it. That distinction — causal, autoregressive, interactive — separates it from nearly everything else that has shipped in the past two years of AI progress.

If you have been watching the AI space primarily through the lens of language models and video generators, Odyssey-2 Max is worth your full attention.

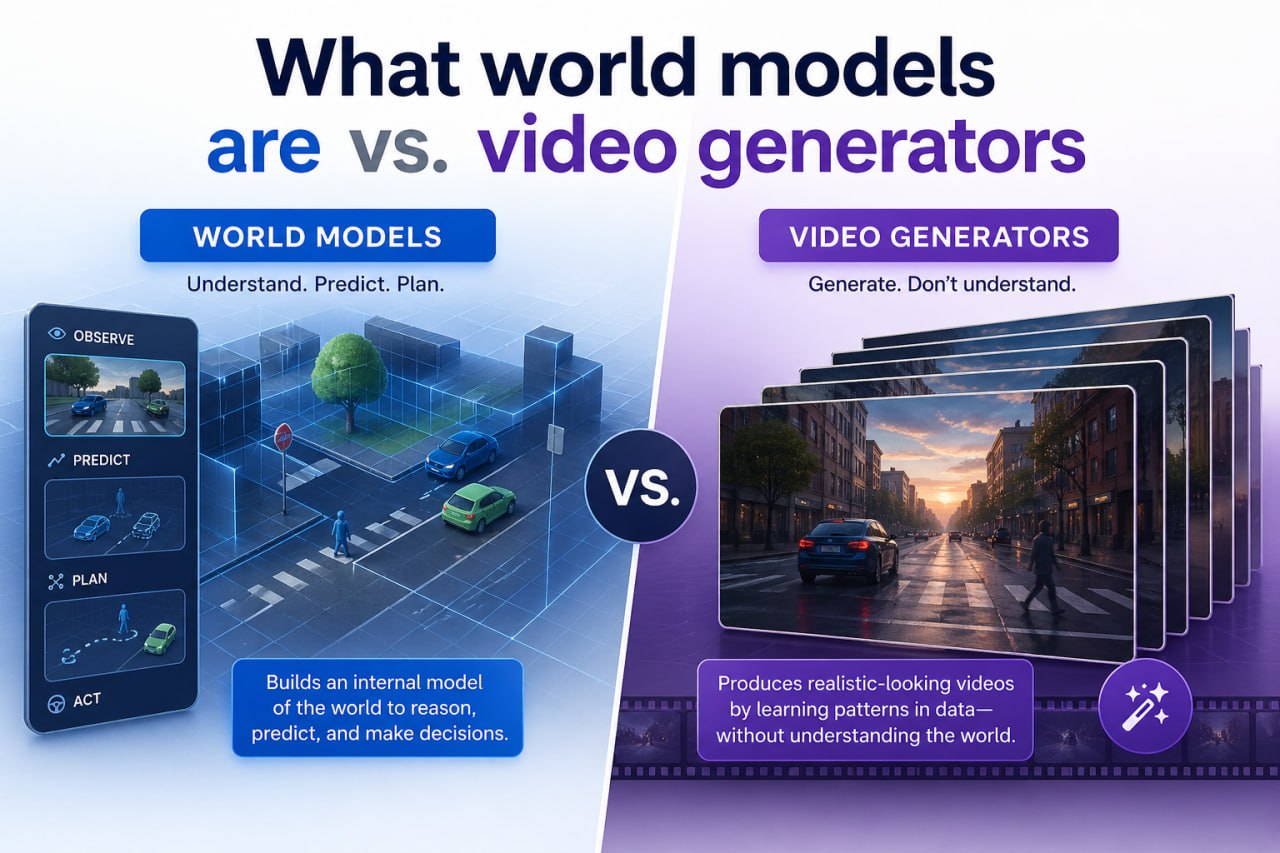

The term "world model" gets thrown around loosely, but the technical definition is precise. A world model is an AI system that learns to simulate how reality evolves over time. Not just what something looks like — but how it behaves. How objects move, fall, collide, and react to forces. How a scene changes when something new enters it.

This is fundamentally different from what Sora, Runway, and Veo do. Those are bidirectional diffusion models. They receive a prompt and generate the entire clip — past, present, and future — simultaneously. The output is fixed the moment generation starts. You cannot steer it mid-flight. You cannot interact with it. The story was written before you saw frame one.

Odyssey-2 Max is autoregressive. Like a language model that predicts the next word based on everything before it, Odyssey-2 Max predicts the next world state based on the current one — plus any actions you feed it. This makes it fundamentally interactive. It is less like watching a movie and more like playing inside a physics engine, except the "engine" is a neural network trained on physical reality at massive scale.
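The autoregressive loop described above can be sketched in a few lines. This is a minimal illustration, not Odyssey's implementation: `toy_step` is a hypothetical stand-in for a learned next-state predictor, and the state is a toy (position, velocity) pair rather than a video frame.

```python
from typing import Callable, Iterable, List, Tuple

State = Tuple[float, float]  # (position, velocity) of a toy 1-D object
Action = float               # force applied during one step


def toy_step(state: State, action: Action, dt: float = 0.1) -> State:
    """Hypothetical stand-in for a learned next-state predictor."""
    position, velocity = state
    velocity = velocity + action * dt
    position = position + velocity * dt
    return (position, velocity)


def rollout(step: Callable[[State, Action], State],
            initial: State,
            actions: Iterable[Action]) -> List[State]:
    """Autoregressive rollout: each new state is predicted from the
    previous state plus the action supplied at that step."""
    states = [initial]
    for action in actions:
        states.append(step(states[-1], action))
    return states


# Same starting state, different actions fed in after the first step:
# the two futures diverge, which a pre-generated clip cannot do.
coast = rollout(toy_step, (0.0, 1.0), [0.0, 0.0, 0.0])
brake = rollout(toy_step, (0.0, 1.0), [0.0, -5.0, -5.0])
```

Because `actions` is any iterable, it could just as well be a generator that reads live user input, which is the sense in which the rollout is interactive.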

The practical result: Odyssey-2 Max can run coherent, interactive simulations for over 120 seconds without collapsing. Bidirectional models typically cap at 10 seconds before consistency breaks down. That is not a minor improvement. It is a different product category.

Benchmarks in AI are often misleading. A model can score well on a leaderboard by optimising for the metric rather than the underlying capability. The VBench 2 physics sub-task is different — it tests whether a model generates video that obeys the laws of physics as a human observer would recognise them.

Here is where Odyssey-2 Max stands:

That is not a marginal gain. Odyssey-2 Max improved on its own predecessor by nearly 9 points and outpaced NVIDIA's best published world model by more than 13 points. On the PAI-Bench physical sub-item, another physics compliance metric, it scored 93.02.

Why does physical accuracy improve so sharply in an autoregressive model? Because the architecture demands it. A bidirectional model can "cheat" — it can produce a plausible-looking clip without actually enforcing causal physical dynamics, because the whole sequence is generated at once. An autoregressive model cannot cheat. Each frame must follow physically from the last. Any mistake in physics compounds forward in time. The pressure to learn real dynamics is baked into the training objective itself.
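To see how a small physics mistake compounds in a step-by-step rollout, consider a toy example. It assumes a dropped object and a hypothetical model whose learned gravity is off by 2%; neither figure comes from Odyssey.

```python
from typing import List


def fall(gravity: float, steps: int, dt: float = 0.1) -> List[float]:
    """Step-by-step integration of a dropped object.
    Returns the distance fallen after each step."""
    distance, velocity = 0.0, 0.0
    trajectory = []
    for _ in range(steps):
        velocity += gravity * dt   # each step builds on the previous one
        distance += velocity * dt
        trajectory.append(distance)
    return trajectory


TRUE_G = 9.81
BIASED_G = 9.81 * 1.02  # hypothetical 2% error in the learned dynamics

truth = fall(TRUE_G, steps=100)
predicted = fall(BIASED_G, steps=100)

# Absolute position error at every step of the rollout.
errors = [abs(p - t) for p, t in zip(predicted, truth)]
```

The error in `errors` never shrinks. It grows roughly with the square of the number of steps, so a bias that is invisible after one second is glaring after ten.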

The result is a model that does not just memorise what physics looks like. It learns to simulate it.

Odyssey-2 Max is built on an Autoregressive Diffusion Transformer (AR DiT) architecture — a hybrid that combines the sequential causal reasoning of autoregressive models with the high-fidelity output quality of diffusion-based generation.

Scale-wise, it is a significant commitment:

The architectural innovations that make real-time generation possible are equally significant:

Training was structured in three progressive stages: first, general visual dynamics to establish how the world evolves; second, interaction and task conditioning to make the model responsive to user inputs; and third, long-horizon stability training specifically to maintain coherence over extended simulation runs. Each stage builds directly on the previous one, which is why the model can sustain two-minute simulations where earlier attempts collapsed after seconds.
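The staged structure can be expressed as a simple curriculum loop. This sketch only shows the ordering and the hand-off of a checkpoint between stages. The stage names, the `Stage` type, and `placeholder_train` are hypothetical labels for illustration, not Odyssey's training code.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass(frozen=True)
class Stage:
    name: str
    goal: str


# Hypothetical labels for the three stages described above.
CURRICULUM = (
    Stage("visual_dynamics", "learn how scenes evolve over time"),
    Stage("interaction_conditioning", "respond to user inputs and tasks"),
    Stage("long_horizon_stability", "stay coherent over extended rollouts"),
)


def run_curriculum(stages: Sequence[Stage],
                   train_stage: Callable[[Stage, List[str]], List[str]],
                   checkpoint: List[str]) -> List[str]:
    """Run stages in order; each stage starts from the previous checkpoint."""
    for stage in stages:
        checkpoint = train_stage(stage, checkpoint)
    return checkpoint


def placeholder_train(stage: Stage, checkpoint: List[str]) -> List[str]:
    """Placeholder trainer: records which stages the checkpoint has seen."""
    return checkpoint + [stage.name]


final_checkpoint = run_curriculum(CURRICULUM, placeholder_train, [])
```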

Odyssey uses a specific phrase to describe what they have built: pretrained physical intelligence. It is worth unpacking, because it carries real implications for where this technology goes next.

The analogy they draw is to a person who has spent years observing and interacting with the physical world but has not yet been trained on any specific task. They understand gravity. They understand friction. They understand how objects behave when dropped, pushed, or compressed. They have not been told what to do with that knowledge, but the foundation is there.

They compare the current state of Odyssey-2 Max to GPT-2, the point just before large language models became truly transformative. That is a considered claim, not marketing hyperbole. GPT-2 was impressive but limited. GPT-3 followed within about 18 months, and ChatGPT arrived less than four years after GPT-2. If the trajectory of world models follows a similar curve, we are looking at systems within the next few years that can simulate arbitrary physical environments reliably enough to train real robots, test real products, and generate real training data for autonomous systems.

The key word in "pretrained physical intelligence" is pretrained. The base model is general. Fine-tuning it for a specific domain — a particular type of environment, a specific robotic task, a particular industrial scenario — becomes dramatically cheaper when the foundation already understands physics.

Odyssey-2 Max is currently in private beta for partners across five sectors. Each is worth examining individually, because the value proposition is different in each case.

Training robots in the real world is expensive, slow, and risky. You need physical hardware, physical space, and a tolerance for a lot of broken things during the learning phase. Simulation has always been the obvious alternative, but most simulators fail on one critical dimension: they do not accurately model the messy variability of the real world. Robots trained in clean simulators often struggle when deployed because the sim-to-real gap is too wide.

A world model trained on real-world video, achieving physics scores at the level Odyssey-2 Max has demonstrated, has the potential to close that gap significantly. The simulation is not a handcrafted physics engine — it is a learned model of how reality actually behaves. That is a fundamentally different foundation for robotics training.

Games have historically required massive manual effort to create interactive environments. Every physics interaction, every dynamic system, every reactive environment detail is handcrafted. World models introduce the possibility of procedurally generating environments that have not been explicitly authored — worlds that respond to player actions in ways the designers never specifically programmed, because the model has learned how physical reality responds to actions in general.

This is not a replacement for human creative direction. It is a tool that expands what a small team can build.

Testing self-driving cars and drones in the real world is constrained by safety, logistics, and cost. Existing simulation environments are improving but are still limited in their physical fidelity. A model that can generate photorealistic, physically consistent simulated environments — and respond interactively to agent decisions — could substantially increase the quality and quantity of training scenarios available for autonomous systems.

Beyond robotics and autonomous vehicles, there is a broad category of enterprise applications where interactive, physically realistic simulation would add value: industrial maintenance training, medical procedure simulation, architectural walkthroughs that respond to environmental inputs, and logistics planning in dynamic environments. Most of these use cases are currently served by purpose-built simulators that are expensive to build and rigid to operate. A general-purpose world model changes the economics.

There are a few things worth tracking as this technology develops.

API access and pricing. Odyssey has opened a developer API waitlist. How they price it will determine which use cases are economically viable in the near term. Real-time inference at this fidelity is compute-intensive, and the cost per simulation minute will matter enormously for applications like robotics training at scale.
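A back-of-envelope calculation shows why the price per simulation minute matters. Odyssey has not published pricing or hardware requirements in this article, so every number below is a hypothetical placeholder; only the arithmetic is the point.

```python
def cost_per_sim_minute(gpu_hourly_usd: float,
                        gpus: int,
                        realtime_factor: float = 1.0) -> float:
    """Compute cost (USD) of one simulated minute.

    realtime_factor is simulated seconds produced per wall-clock second;
    1.0 means the model runs exactly in real time.
    """
    if realtime_factor <= 0:
        raise ValueError("realtime_factor must be positive")
    wall_clock_hours = (1.0 / 60.0) / realtime_factor
    return gpu_hourly_usd * gpus * wall_clock_hours


# Hypothetical figures: 8 GPUs at $3 per GPU-hour, running in real time.
per_minute = cost_per_sim_minute(gpu_hourly_usd=3.0, gpus=8)

# Hypothetical workload: 10,000 hours of simulated robot experience.
training_run_cost = per_minute * 10_000 * 60
```

Under these made-up inputs, one simulated minute costs roughly $0.40 and the 10,000-hour run roughly $240,000. Halving either the GPU count or the hourly rate halves both figures.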

The sim-to-real gap in practice. The benchmark scores are promising, but what matters for robotics specifically is whether robots trained in Odyssey-2 Max simulations actually perform better in the real world. That data does not yet exist publicly. Early partner results will be the key signal to watch.

Competitive responses. NVIDIA, Google DeepMind, and several other labs are actively working on world models. Odyssey's lead in the VBench physics metric is real, but the gap between state-of-the-art and second place tends to close quickly in this industry. The architecture and training methodology they have disclosed are detailed enough that larger players will study them carefully.

Longer horizons. 120 seconds is impressive. Many robotics and autonomous systems applications require simulations that run for minutes or hours. The KV cache architecture Odyssey has described could scale, but it has not been demonstrated publicly at those durations yet.
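One generic way a KV cache can support long rollouts is to keep only a fixed window of recent frames. The sketch below shows that idea. It is an assumption for illustration, not a description of Odyssey's cache, and `RollingKVCache` is a hypothetical name.

```python
from collections import deque
from typing import Deque, List, Tuple

KV = Tuple[List[float], List[float]]  # (key, value) for one frame


class RollingKVCache:
    """Keeps keys and values for only the most recent `window` frames."""

    def __init__(self, window: int) -> None:
        # deque(maxlen=...) evicts the oldest entry automatically.
        self.entries: Deque[KV] = deque(maxlen=window)

    def append(self, key: List[float], value: List[float]) -> None:
        self.entries.append((key, value))

    def __len__(self) -> int:
        return len(self.entries)


cache = RollingKVCache(window=4)
for frame in range(10):
    # Toy one-dimensional keys and values standing in for real tensors.
    cache.append([float(frame)], [float(frame)])
```

Memory stays constant no matter how long the simulation runs, but anything older than the window is forgotten. That trade-off is one reason coherence over minutes or hours is harder than coherence over seconds.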

The development path for AI over the past five years has followed language. Bigger language models, better language models, language models applied to code, to reasoning, to agents. Language has been the primary medium because it is data-dense, well-structured, and maps cleanly to human-defined tasks.

World models are a different bet. They are a bet that the next significant capability jump comes from teaching AI to understand physical reality rather than language. That the path to general AI assistance runs through physics, not just text.

Whether that bet is right is not yet clear. But Odyssey-2 Max is the most compelling evidence so far that it might be. A system that genuinely simulates physical dynamics interactively, at scale, for over two minutes, and that outperforms NVIDIA's best published attempt, is not a research curiosity. It is an early production system in a category that did not meaningfully exist 18 months ago.

The question for businesses is not whether world models will matter. It is how quickly the technology reaches the fidelity and accessibility threshold needed for your specific use case.
